An engineering perspective

Fig. 1: Swarmbots: self-configurable robots. A notable example of collective intelligence.

From an engineering and strictly pragmatic point of view, AI is of value simply for its capabilities and performance, independently of the methods and mechanisms used to produce them.

This point of view is therefore emulationist rather than simulationist: the aim is to build machines that do not necessarily simulate and reproduce the behavior of the human mind, but can emulate it selectively, as the final result of several operations. This approach has been dominant in the history of AI, and it has led to programs that reach a high level of competence in knowledge and in the resolution of problems considered complex. Such programs are built as manipulators of uninterpreted formal symbols, so the machine can be conceived simply as a syntactic transformer with no real semantic understanding of the problem.

Basic Architecture of Artificial Intelligence Systems

The software behind AI systems is not a fixed set of information representing the solution to a problem, but an environment in which basic knowledge is represented, used and modified. The system examines a broad range of possibilities and builds a solution dynamically. Every system of this kind must be able to express two kinds of knowledge in a separate and modular way: a knowledge base and an inference engine.

By knowledge base, we mean the module that collects the knowledge about a domain, meaning the problem. We can detail the knowledge base by dividing it into two separate blocks: a) the block of statements and facts (temporary or short-term memory), b) the block of relations and rules (long-term memory). Temporary memory contains declarative knowledge about the particular problem to solve: a representation built from true facts, either introduced at the beginning of the consultation or proved true by the system during the work session. The long-term memory, on the other hand, stores the rules that provide advice, suggestions and strategic guidelines for building up the store of knowledge available to solve a problem. The rules are statements composed of two units. The first is called the antecedent and expresses a condition; the second is called the consequent and starts the action to be applied if the antecedent is proved true. The general syntax is therefore: IF antecedent THEN consequent.
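The two blocks of the knowledge base can be sketched in a few lines of Python. This is only an illustrative sketch: the names `Rule` and `KnowledgeBase`, and the medical-style facts, are assumptions made for the example and do not come from any particular system. Conditions are simplified to plain strings.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rule:
    """IF every condition in `antecedent` holds THEN assert `consequent`."""
    antecedent: frozenset  # conditions, simplified here to plain strings
    consequent: str        # statement asserted when the antecedent is proved true


@dataclass
class KnowledgeBase:
    facts: set = field(default_factory=set)    # short-term (temporary) memory
    rules: list = field(default_factory=list)  # long-term memory


kb = KnowledgeBase()
# Facts introduced at the beginning of a consultation (hypothetical example).
kb.facts.update({"has_fever", "has_cough"})
# IF has_fever AND has_cough THEN suspect_flu
kb.rules.append(Rule(frozenset({"has_fever", "has_cough"}), "suspect_flu"))
```

Keeping facts and rules in separate containers mirrors the separation between temporary and long-term memory: facts change during a session, while the rules stay the same from one session to the next.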

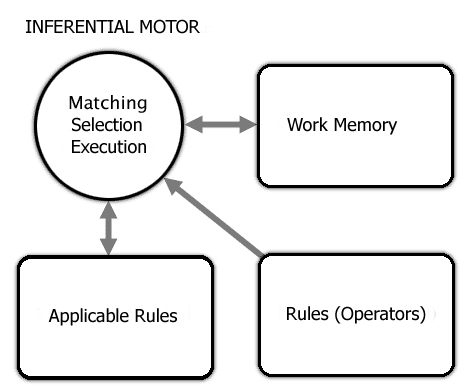

The inference engine is the module that uses the knowledge base to reach a solution to the problem and to provide explanations. It is responsible for choosing which knowledge to use at each moment of the solution process.

To be proven valid in a specific situation, each rule in the set representing the domain knowledge must be compared with the facts representing what is currently known about the case, and must be satisfied by them. This is done through a matching operation that compares the antecedent of the rule with the facts present in the temporary memory. If the matching succeeds, the system proceeds with the series of actions listed in the consequent. If the consequent contains a conclusion, satisfying the antecedent allows the system to confirm that statement as a new fact in the short-term memory. The matching operation creates inferential chains, which indicate how the system uses the rules to perform new inferences.
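The matching step can be illustrated with a minimal sketch, assuming that an antecedent is a conjunction of simple conditions and that a rule is a pair `(antecedent, consequent)`. The function names `matches` and `fire` and the sample facts are hypothetical, chosen only for this example.

```python
def matches(antecedent, facts):
    """The antecedent matches when every one of its conditions is a known fact."""
    return antecedent <= facts  # subset test between sets


def fire(rule, facts):
    """Apply one rule; return True only if a new fact was added to memory."""
    antecedent, consequent = rule
    if matches(antecedent, facts) and consequent not in facts:
        facts.add(consequent)  # the conclusion becomes a new short-term fact
        return True
    return False


facts = {"has_fever", "has_cough"}
rule = (frozenset({"has_fever", "has_cough"}), "suspect_flu")
fired = fire(rule, facts)
```

After the call, `suspect_flu` is part of the temporary memory and can in turn satisfy the antecedent of another rule, which is how an inferential chain forms.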


There are two main ways of creating inferential chains starting from a set of rules:

- forward chaining. This method strives to reach a conclusion starting from the facts present in the temporary memory at the beginning of the process and applying the production rules forward. Inference is said to be guided by the antecedent, since the search for rules to apply is based on matching the facts present in memory against those logically combined in the antecedent of the active rule;

- backward chaining. In this case the processor proceeds by reducing the main goal into smaller problems. Once the thesis to be proven is identified, production rules are applied backwards, in search of coherence with the initial data. The interpreter searches for a rule, if one exists, whose consequent is the statement to be tested for truth, then checks the smaller sub-goals that make up the antecedent of that rule. In such cases the inference is guided by the consequent.
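The two strategies can be compared side by side in a short sketch. It assumes rules are `(antecedent, consequent)` pairs with purely conjunctive antecedents; the rule set, the fact names and the `pending` guard against circular rules are assumptions of this example, not part of the description above.

```python
RULES = [
    (frozenset({"has_fever", "has_cough"}), "suspect_flu"),
    (frozenset({"suspect_flu"}), "recommend_rest"),
]


def forward_chain(facts, rules):
    """Antecedent-driven: fire rules until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for antecedent, consequent in rules:
            if antecedent <= known and consequent not in known:
                known.add(consequent)
                changed = True
    return known


def backward_chain(goal, facts, rules, pending=frozenset()):
    """Consequent-driven: prove `goal` via a rule that concludes it."""
    if goal in facts:
        return True
    if goal in pending:  # goal already being proved: avoid infinite recursion
        return False
    for antecedent, consequent in rules:
        # Each condition of the antecedent becomes a smaller sub-goal.
        if consequent == goal and all(
            backward_chain(sub, facts, rules, pending | {goal})
            for sub in antecedent
        ):
            return True
    return False


initial = {"has_fever", "has_cough"}
derived = forward_chain(initial, RULES)
proved = backward_chain("recommend_rest", initial, RULES)
```

Forward chaining derives everything that follows from the initial facts, whether or not it is needed, while backward chaining only explores the rules relevant to the stated goal.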